Episode 33 — Bias and Fairness in AI

No issue highlights AI’s societal impact more sharply than bias and fairness. This episode begins by defining bias in AI systems and tracing its sources to data, algorithms, and human choices. We explore data bias, such as underrepresentation of certain groups, and algorithmic bias, where optimization reinforces inequities. Examples include facial recognition systems with unequal error rates, hiring algorithms reproducing gender or racial bias, and predictive policing that amplifies systemic inequalities. These cases show how AI can unintentionally reflect and magnify existing social problems, undermining trust and fairness.
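For listeners who want to see what "unequal error rates" means in practice, here is a minimal sketch in Python. It assumes binary labels (1 for a positive match, 0 for a negative) and a group tag for each record. The function name `error_rates_by_group` and the tiny dataset are hypothetical, invented for illustration, and do not come from any real system or study.

```python
from collections import defaultdict


def error_rates_by_group(y_true, y_pred, groups):
    """Return false positive and false negative rates for each group.

    Hypothetical helper for illustration: labels are assumed to be 0 or 1.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]
        if truth == 1:
            c["pos"] += 1
            if pred == 0:
                c["fn"] += 1  # missed a true positive
        else:
            c["neg"] += 1
            if pred == 1:
                c["fp"] += 1  # flagged a true negative

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            # None marks a rate that cannot be computed for this group
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
    return rates


# Made-up toy data: four records for group "A", four for group "B".
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = error_rates_by_group(y_true, y_pred, groups)
for group, r in sorted(rates.items()):
    print(group, r)
```

In this toy data the model makes no mistakes on group A but gets half of group B's positives and half of its negatives wrong. A gap like that between groups is the kind of disparity an audit would look for, though which gap matters most depends on the application.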

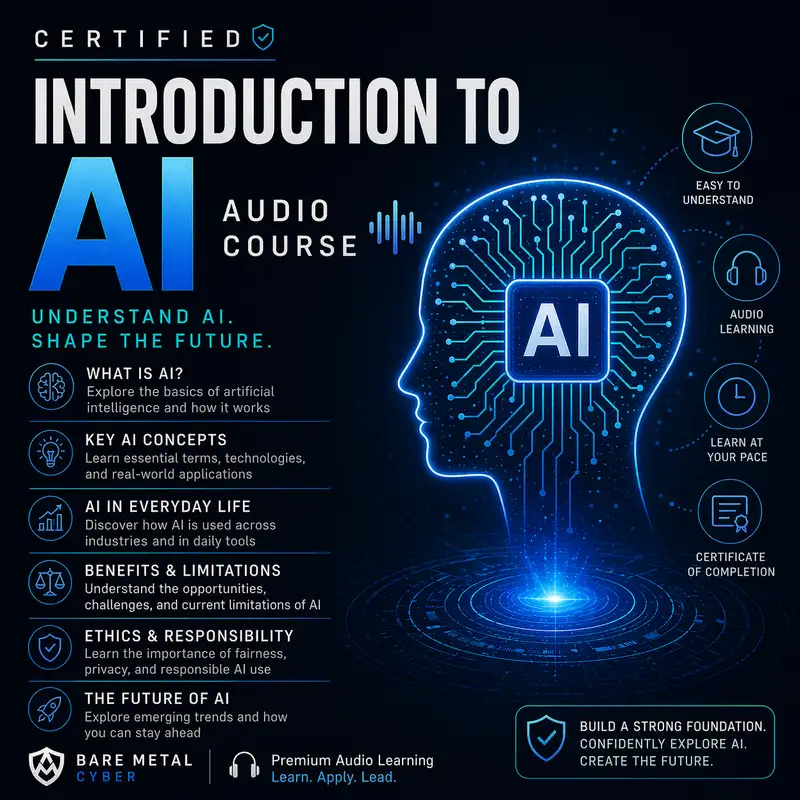

We then shift to the methods and principles for addressing bias. Technical strategies include balancing datasets, adjusting algorithms with fairness constraints, and post-processing results to improve equity. Governance approaches involve transparency practices like datasheets and model cards, accountability frameworks, and independent audits. There is no single, universal definition of fairness: cultural and legal contexts shape what equitable AI looks like in different societies. Ultimately, fairness in AI is not just a technical problem but a moral and political challenge. By the end of this episode, listeners will appreciate that mitigating bias requires vigilance, interdisciplinary cooperation, and a commitment to building systems that serve all users equitably. Produced by BareMetalCyber.com, where you’ll find more cyber prepcasts, books, and information to strengthen your certification path.