Episode 21 — Common Pitfalls and Bias in AI Systems

AI systems are only as good as the data and assumptions that shape them, and many fail because of the same recurring pitfalls. This episode outlines the most common ones, starting with poor data quality, unbalanced datasets, and labeling errors. We’ll discuss sampling bias, measurement bias, and proxy variables that inadvertently encode sensitive traits. Overfitting and underfitting are introduced as technical pitfalls, alongside automation bias, the human tendency to over-trust machine outputs.

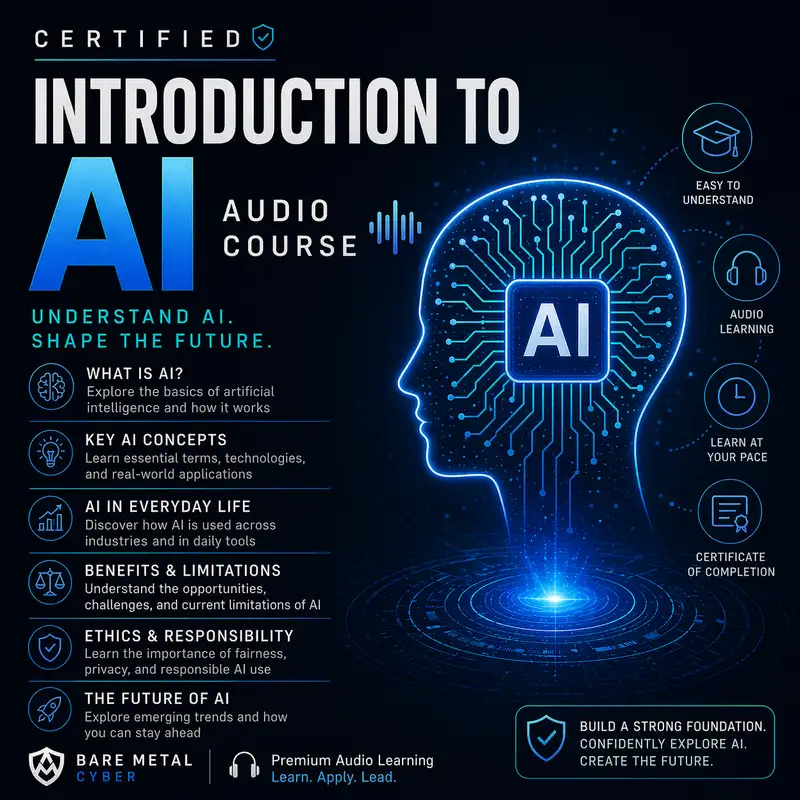

We then focus on bias as a deeper issue. Historical inequalities embedded in data can produce systems that reinforce discrimination, from facial recognition tools with unequal accuracy across groups to hiring algorithms that favor certain demographics. We cover strategies for detecting and mitigating bias, including pre-processing corrections to training data, algorithmic adjustments during model training, and post-processing interventions on model outputs. Governance, documentation, and human oversight are emphasized as necessary complements to technical fixes. By the end, listeners will understand that building fair and trustworthy AI requires vigilance not just during design, but throughout deployment and use.

Produced by BareMetalCyber.com, where you’ll find more cyber prepcasts, books, and information to strengthen your certification path.